Our Tag: AI News Collection

Explore all our latest insights, tutorials, and announcements on AI workflow and tech.

The End of Token-Cost Anxiety: Why LFM2 is the Most Cost-Effective Path

Strategic Cost Optimization

In the 2026 AI News landscape, "Token Fatigue" is real. Businesses are tired of unpredictable cloud bills. Scalexa now recommends the LFM2 hybrid model as a way to decouple growth from API costs. Because LFM2 is 3x more efficient to train and 2x faster to run on standard CPUs, it offers the most cost-effective path to building general-purpose AI systems. At Scalexa, we build "Liquid-Native" web apps that run AI locally in the browser or on-premise, eliminating the per-token tax entirely. This creates a psychological sense of "Digital Ownership" for our clients. Scalexa is your architect for an AI future that is not just smarter, but fundamentally more sustainable and profitable. Catch the full analysis on Scalexa AI News.
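To make the idea of decoupling growth from API costs concrete, here is a rough sketch comparing a per-token API bill with a flat monthly cost for local hardware. Every price and volume in it is a hypothetical placeholder, not a Scalexa or LFM2 figure, and the function names are our own.

```python
def monthly_api_cost(tokens_per_month: int, price_per_million_tokens: float) -> float:
    """Cost of a pay-per-token API for one month of usage."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(fixed_monthly_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which a flat local cost equals the API bill."""
    return fixed_monthly_cost / price_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs: $5 per million tokens, $500/month for local hardware.
    price = 5.0
    local_cost = 500.0
    threshold = break_even_tokens(local_cost, price)
    for volume in (10_000_000, 100_000_000, 1_000_000_000):
        api = monthly_api_cost(volume, price)
        cheaper = "local" if volume > threshold else "API"
        print(f"{volume:>13,} tokens: API ${api:,.2f} vs local ${local_cost:,.2f} -> {cheaper}")
```

The point of the sketch is the shape of the curve: the API bill grows linearly with usage while the local cost stays flat, so the comparison only favors local deployment above the break-even volume.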

Vision-Language Breakthroughs: Real-Time Image Analysis with LFM2-VL

Seeing at the Speed of Liquid

A major headline in AI News is the release of LFM2-VL, a vision-language model designed for low-latency edge deployment. Unlike traditional vision models that upscale and distort images, LFM2-VL uses intelligent patch-based handling to process resolutions up to 1024x1024 instantly. Scalexa is leveraging the LFM2-VL capabilities to build real-time monitoring and quality control systems for manufacturing clients. The psychological advantage of "Real-Time Sight" is immense; it allows for immediate course correction rather than retrospective reporting. Scalexa turns these vision models into your brand’s "digital eyes," ensuring your operations are as observant as they are intelligent. Stay tuned for more vision-tech updates at Scalexa.in AI News.

LFM2 vs. Llama 3.3: The Battle for the Pareto Frontier

Choosing Efficiency Over Hype

In this week’s AI News, the debate centers on the "Pareto Frontier" of AI: the best available trade-off between quality and speed. While Llama 3.3 is a powerhouse, the LFM2 series dominates in prefill and decode throughput, especially on non-GPU hardware. At Scalexa, we’ve benchmarked these models and found that for math-heavy and long-context tasks, LFM2’s hybrid LIV (Linear Input-Varying) operators provide a significant edge. Psychologically, this "Constant-Time" inference reduces the anxiety of scaling; your costs stay predictable even as your data grows. Scalexa helps you navigate these benchmarks to choose the engine that actually fits your hardware reality. Follow the latest technical reviews on Scalexa AI News.

Memory Efficiency in 2026: Scaling to 24B Parameters on a Laptop

High-Capacity, Low Footprint

One of the most impressive AI News stories this year is the LFM2-24B-A2B model. Using a Sparse Mixture-of-Experts (MoE) design, it activates only 2B parameters per token, allowing a massive 24B model to fit into just 32GB of RAM. At Scalexa, we’ve found that this "Lean Intelligence" is a game-changer for B2B firms that handle sensitive data. You no longer need a $10,000 server to run enterprise-grade reasoning; you can run the LFM2-24B model via Ollama on a standard workstation. Scalexa specializes in optimizing these local deployments, ensuring you get maximum "Cognitive Density" without the high cloud costs. Explore how Scalexa is democratizing high-end AI in our AI News section.
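As a back-of-the-envelope check on the 32GB figure, the sketch below estimates how much memory the weights of a 24B-parameter model need at different precisions. It assumes that all expert weights stay resident in memory (sparse activation reduces compute per token, not the number of stored weights) and it ignores the KV cache and runtime overhead; the function names and the precision choices are illustrative assumptions, not published LFM2 specifications.

```python
def weight_memory_gb(total_params: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return total_params * bits_per_param / 8 / 1e9


def active_fraction(active_params: float, total_params: float) -> float:
    """Share of the parameters that take part in computing one token."""
    return active_params / total_params


if __name__ == "__main__":
    total = 24e9   # total parameters, from the model name
    active = 2e9   # parameters activated per token, from the model name
    ram_gb = 32    # RAM budget quoted in the article

    for bits in (16, 8, 4):
        need = weight_memory_gb(total, bits)
        verdict = "fits" if need < ram_gb else "does not fit"
        print(f"{bits:>2}-bit weights: {need:.0f} GB -> {verdict} in {ram_gb} GB")

    print(f"Active per token: {active_fraction(active, total):.1%} of all weights")
```

Under these assumptions the 16-bit weights alone exceed 32GB, so fitting the model on a workstation depends on quantized weights; the 2B active parameters mainly explain why each token is cheap to compute.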

The Liquid Revolution: Why LFM2 is the End of "Laggy" On-Device AI

Speed as a Psychological Barrier

In the fast-moving AI News cycle of 2026, we’ve seen that the biggest hurdle to AI adoption isn't intelligence; it's latency. Users subconsciously disengage when an AI "stutters." Liquid AI’s new LFM2 Ollama model solves this by using a hybrid architecture that delivers 2x faster decode speeds on standard CPUs. At Scalexa, we’ve integrated LFM2 into local business workflows to remove the "wait time" that kills productivity. When your AI responds as fast as a human colleague, the psychological barrier to collaboration disappears. Scalexa helps you deploy these "Liquid" models to ensure your team stays in the flow, turning raw speed into a measurable competitive advantage. Stay updated on the latest shifts at our AI News hub.

Self-Correcting Code: Using MiniMax-M2.7 to Eliminate Technical Debt

Architecting for Longevity

In the 2026 AI News landscape, "Vibe Coding" has evolved from a hobby into a sustainable production practice. Scalexa is now leveraging MiniMax-M2.7 to build "Self-Correcting" web applications. Because M2.7 can autonomously analyze logs and propose causality-based fixes, it effectively acts as a 24/7 senior developer for your site. This reduces the psychological burden of "launch day anxiety," knowing that your system has the intelligence to recover from online incidents with minimal human intervention. You can explore the MiniMax-M2.7 Ollama integration to see how it handles complex engineering systems on Terminal Bench 2. Scalexa turns this self-evolving tech into a competitive advantage for your business, building websites that are not just beautiful, but fundamentally resilient. Catch the full story at Scalexa.in AI News.

Reducing the Hallucination Gap: How M2.7 Achieved the "Omniscience Index"

The Reliability Revolution

A recurring concern in AI News has always been the "Hallucination Fear": the risk of AI confidently stating falsehoods. MiniMax-M2.7 has addressed this head-on, achieving a massive leap in the "AA-Omniscience Index" compared to its predecessor. At Scalexa, we’ve observed that M2.7’s self-feedback loops allow it to catch its own errors before they ever reach the user. This creates a level of "Psychological Safety" for businesses that were previously hesitant to deploy AI in high-stakes office scenarios like Excel auditing or PPT generation. By using the MiniMax-M2.7 model on Ollama, you are investing in a system that prioritizes truth over speed. Scalexa specializes in deploying these low-hallucination models to protect your brand's credibility while maximizing operational efficiency. For more on AI reliability, visit our AI News section.

MiniMax-M2.7 vs. Gemini 3.1: The Battle for Open-Source Reasoning Dominance

Benchmarking the Breakthrough

In this week’s AI News, MiniMax-M2.7 is making waves for tying with Google’s Gemini 3.1 in autonomous ML benchmarks. At Scalexa, we have tested M2.7’s performance in real-world software engineering, where it achieved a staggering 56.22% on SWE-Pro. What makes M2.7 psychologically superior for developers is its "Vibe-Pro" capability: an aesthetic and functional understanding of WebDev and AppDev that feels more human than robotic. You can run this powerhouse via the official Ollama library to experience its multi-language coding mastery in Rust, Go, and TypeScript. Scalexa helps you choose between these giants, ensuring you don't just follow the hype, but invest in the model that actually "thinks" the way your business needs. Stay updated with our AI News blog for deep-dive technical comparisons.

Agent Teams and Memory: Navigating Complex Workflows with MiniMax-M2.7

The End of Single-Prompt Limitations

As reported in recent AI News, MiniMax-M2.7 has redefined the concept of "Agent Teams." Instead of one bot trying to do everything, M2.7 can coordinate specialized roles to solve multi-stage engineering problems. At Scalexa, we’ve integrated these "Harness" workflows to handle end-to-end project delivery with a 97% skill adherence rate. Psychologically, this solves the "Hand-off Anxiety" that occurs when humans have to bridge the gap between different AI tasks. With the Ollama MiniMax-M2.7:cloud integration, your team gains a persistent memory layer that keeps the context of a 200,000-token project perfectly intact. Scalexa ensures that your digital agents work together as a cohesive unit, allowing you to focus on high-level strategy while the "Agent Team" handles the execution. Check out the latest trends at Scalexa AI News.

The Self-Evolution Milestone: Why MiniMax-M2.7 is Different from Every Other AI

The Model That Built Itself

In the latest AI News for March 2026, the spotlight has shifted to MiniMax-M2.7. While most models are passive recipients of data, M2.7 is "self-evolving": it actually participated in 30% to 50% of its own development workflow by debugging its own code and optimizing its own training loops. At Scalexa, we see this as a psychological turning point: we are moving from "tools we use" to "systems that improve themselves." By leveraging the MiniMax-M2.7 Ollama model, businesses can tap into a level of autonomous reasoning that matches GPT-5.3-Codex. This reduces the "Management Tax" on leadership, as the AI takes on the burden of its own maintenance. Scalexa helps you integrate these self-improving systems into your core operations, ensuring your technical debt doesn't just stop growing but starts shrinking. Explore more on our AI News page.

Hallucination Zero: How MiniMax-M2.7 Solves the "Trust Gap" in B2B AI

A Massive Leap in Omniscience

The most critical update in 2026 AI News regarding MiniMax is its success in slashing hallucination rates. M2.7 achieved a massive jump on the AA-Omniscience Index, moving from a negative 40 (M2.5) to a positive score, with a hallucination rate of only 34%, significantly lower than many of its global competitors. At Scalexa, we know that the biggest psychological barrier to AI adoption is the "Hallucination Fear." If you can't trust the output, the tool is useless. By utilizing M2.7's deep context-gathering, where it "reads extensively before writing," Scalexa builds automation workflows that are grounded in fact, not fiction. We provide the technical guardrails that turn AI into a reliable business partner. When your systems are this accurate, you stop worrying about the "what if" and start focusing on the "what's next." Scalexa is where technical speed meets human-level trust.

Building Agent-Ready Ecosystems with MiniMax-M2.7 and Scalexa

From Web Pages to Web Systems

As AI News reports, the arrival of M2.7 marks the end of "Isolated Apps" and the beginning of "Integrated Ecosystems." MiniMax-M2.7 is natively optimized for multi-agent collaboration, allowing it to act as a data analyst, macro analyst, and web engineer simultaneously. Scalexa leverages this multi-role capability to build interactive web systems that don't just display data but "understand" the project code in real-time. Whether it's generating full PowerPoint presentations from Excel sheets or providing interactive dashboards via Streamlit, M2.7 ensures your web platform is a living, breathing productivity hub. At Scalexa, we integrate these complex skillsets into your custom build, reducing cognitive load for your team and creating a frictionless user experience that feels like magic. Scalexa is your partner in building the next generation of Agentic Web Platforms.

MiniMax-M2.7 vs. GPT-5.3: A Cost-Efficiency Breakdown for 2026

Frontier Intelligence at One-Third the Cost

In this week’s AI News, the debate centers on the economics of intelligence. While GPT-5.3 remains a heavyweight, MiniMax-M2.7 is making waves by delivering equivalent reasoning power at less than one-third the operational cost. With an Elo score of 1495 on GDPval-AA, M2.7 has become the highest-rated open-source-accessible model for professional document processing. At Scalexa, we’ve benchmarked M2.7 against frontier models and found that its "Skill Adherence" (a 97% compliance rate maintained across more than 40 complex tasks) makes it the superior choice for high-volume B2B automation. Scalexa specializes in migrating businesses to these cost-efficient stacks, allowing you to scale your AI operations without the "Enterprise Tax" of more expensive providers. We turn high-level tech into a sustainable, high-ROI asset for your brand.

The 3-Minute Recovery: How M2.7 Redefines Site Reliability Engineering

Eliminating Downtime with System Reasoning

The latest AI News highlights a staggering achievement for MiniMax-M2.7: reducing production incident recovery times to under three minutes. In the high-stakes world of e-commerce, every second of downtime is a psychological and financial drain. At Scalexa, we leverage M2.7’s SRE-level reasoning (its ability to correlate timelines, infer root causes from complex logs, and provide prioritized fixes) to build a "Digital Immune System" for our clients. On the SWE-Pro benchmark, M2.7 scored 56.22%, placing it alongside elite models like Opus 4.6 and GPT-5.3. By letting Scalexa deploy these autonomous SRE agents, you are effectively buying insurance against technical failure. We don't just monitor your site; we give it the brain it needs to heal itself before you even notice a problem.

The Self-Evolution Era: Why MiniMax-M2.7 is the "Strongest Coworker" of 2026

AI That Rewrites Its Own Future

In the most recent AI News, MiniMax has disrupted the B2B landscape with the release of M2.7, a proprietary model that initiates its own "self-evolution" cycle. Unlike traditional LLMs that remain static until their next training run, M2.7 is capable of building its own "Agent Harness": autonomously reading logs, debugging code, and running reinforcement learning experiments to optimize its own performance. At Scalexa, we’ve found that this capability allows the model to handle 30-50% of the R&D workload entirely on its own. The psychological impact of a "Self-Improving Colleague" cannot be overstated; it moves AI from a passive tool to an active participant in your business growth. Scalexa helps you integrate this self-evolving intelligence into your technical pipeline, ensuring that your automation isn't just fast, but constantly getting smarter while you sleep.

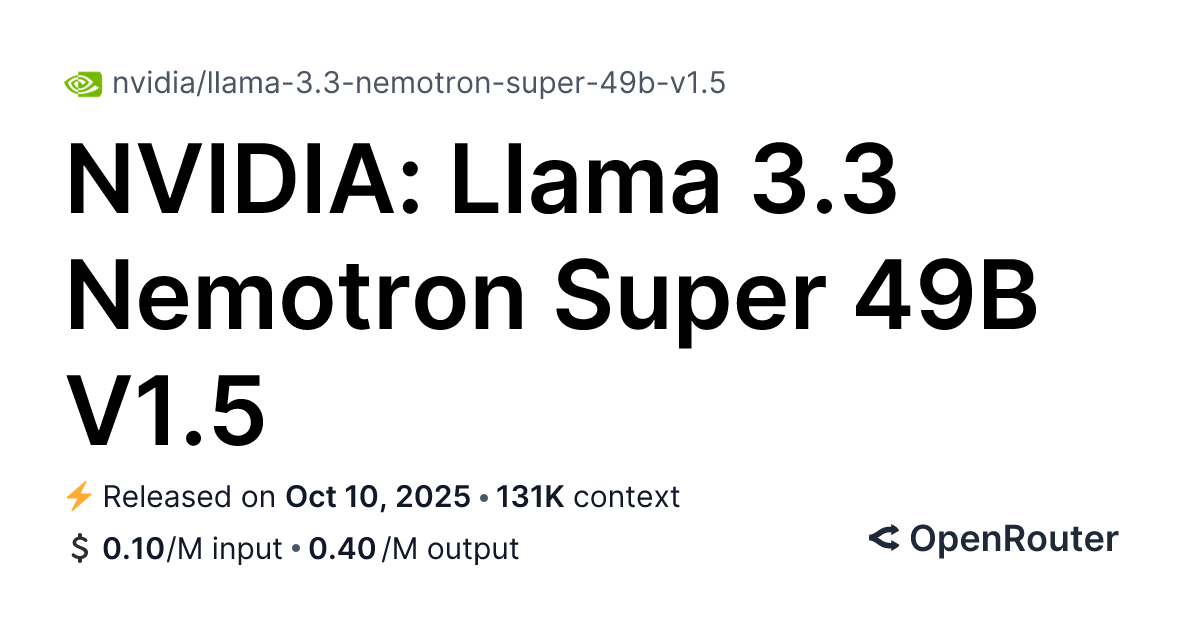

Building Next-Gen "Agentic" Apps with Nemotron-3-Super and Scalexa

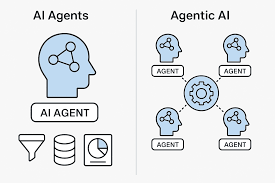

The New Full-Stack: UI, Logic, and Reasoning

In the 2026 web development landscape, a "static" app is a dead app. As AI News highlights, the future is "Agentic": apps that reason through user intent. Scalexa is pioneering the integration of Nemotron-3-Super directly into full-stack React and Node.js environments. Because Nemotron is optimized for "Tool Calling," it can reliably navigate complex API libraries to perform actions on behalf of the user, such as booking logistics or generating dynamic financial reports. This reduces the "Cognitive Load" on your customers, making your app feel intuitive and "magical." At Scalexa, we don't just build interfaces; we build intelligent ecosystems. By leveraging NVIDIA's latest NIM microservices, we ensure your application is as scalable as it is smart. Scalexa turns the raw power of Nemotron into a seamless, high-conversion user experience that anticipates needs before they are even voiced.
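For readers unfamiliar with tool calling, the general pattern can be sketched as follows: the model emits a structured request naming a function and its arguments, and the application looks that function up and runs it. The JSON shape, the `book_shipment` function, and the registry below are hypothetical illustrations of the pattern; they are not the Nemotron or NIM API.

```python
import json
from typing import Any, Callable, Dict

# Registry of functions the application is willing to run on the model's behalf.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Decorator that registers a function under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def book_shipment(origin: str, destination: str) -> dict:
    """Hypothetical logistics action; a real app would call a booking service."""
    return {"status": "booked", "route": f"{origin}->{destination}"}


def dispatch(model_output: str) -> Any:
    """Parse a JSON tool request and run the matching registered function."""
    call = json.loads(model_output)
    name = call.get("tool")
    if name not in TOOLS:
        # Refuse anything the application did not explicitly register.
        raise ValueError(f"unknown tool: {name!r}")
    return TOOLS[name](**call.get("arguments", {}))


if __name__ == "__main__":
    # Stand-in for text a tool-calling model might return.
    request = '{"tool": "book_shipment", "arguments": {"origin": "Pune", "destination": "Delhi"}}'
    print(dispatch(request))
```

Keeping an explicit registry means the model can only trigger actions the application has deliberately exposed, which is the main safety property of this design.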

Why FOMO is Killing Your AI Strategy (And How to Fix It)

The FOMO Trap: Why Jumping on the AI Bandwagon Hurts

The market is noisy. Every week, there's a new "revolutionary" AI tool. Enterprises feel pressured to adopt, fearing they will be left behind. This fear is FOMO, and it is a terrible strategy. You are not missing out; you are saving money by waiting.

Surprise Insight: Studies show that 60% of enterprise AI projects fail to deliver value, and the primary reason is not technology, but lack of clear business alignment. When companies adopt AI just because everyone else is, they often implement solutions to non-existent problems.

Instead of asking "Should we use AI?", ask "What problem do we have that AI can solve?". AI News is full of cautionary tales of companies that bought AI for the sake of it.

Stop Using AI as a Goal; Use It as a Solution

You must define the problem before the solution. If your process is broken, AI won't fix it; it will just automate the brokenness faster. Identify the pain point first.

Counter-Intuitive Fact: The most successful "AI" implementations often start with zero AI technology. They start with better data governance, process optimization, and clear KPIs. The AI comes last, not first.

Scalexa advocates for this problem-first methodology. By focusing on the "Why" and "What", Scalexa helps you avoid the trap of implementing AI for the sake of it.

How Scalexa Cuts Through the AI Hype

Scalexa acts as a strategic filter. We analyze your enterprise needs and match them with verified AI solutions, not just the latest trends. Our goal is to ensure your AI budget is spent on what actually moves the needle.

We provide curated AI News and insights, ensuring you know what works and what is just vaporware. Our platform is designed to align AI initiatives with tangible business outcomes.

Don't let FOMO drive your budget. Let value drive your strategy.

Quick Wins: Starting Your AI Journey Right

- Audit your data: Is it clean, accessible, and secure?
- Define one specific business problem (e.g., customer churn, supply chain efficiency).
- Consult an expert (like Scalexa) before buying tools.

Expert Callout: "Implementing AI because everyone else is doing it is like buying a spaceship to drive to the grocery store. You need a vehicle that fits the terrain, not the hype."

Frequently Asked Questions

Why is FOMO a bad reason to implement AI?
Because FOMO leads to misaligned projects. You adopt technology without a clear problem, resulting in low ROI and wasted resources.

How do I know if my company actually needs AI?
If you have a specific, repeatable problem that involves large amounts of data or complex decision-making, AI might help. Otherwise, process improvement comes first.

What is the first step in a successful AI strategy?
Define the problem. Do not look for a solution until you have clearly articulated the challenge you want to overcome.

Does Scalexa help with small business AI?
Yes, Scalexa filters options for businesses of all sizes, focusing on practical, cost-effective solutions rather than enterprise-only tools.

Where can I get reliable AI News?
Scalexa provides a dedicated feed of verified AI News, curated for enterprise relevance and strategic impact.

Why Lucid Bots' $20M Funding Proves Your Building's Windows Are About to Get a Major Upgrade

Section 1: The $20M Signal Nobody Expected

Lucid Bots just closed a $20M funding round, and here's the surprising insight: the demand didn't just grow, it exploded. Over the last year, requests for their window-cleaning drones and power-washing robots have multiplied beyond what even the most bullish analysts predicted.

The wow factor: Most people assume robotics startups focus on warehouses or factories. Lucid Bots proved there's massive untapped demand in something as mundane as high-rise window cleaning. The company essentially created a new category: autonomous building exterior maintenance.

- Window-cleaning drones eliminate fall risks entirely
- Power-washing robots work 24/7 without overtime costs
- Commercial buildings can now schedule cleaning with zero human intervention

Section 2: Why This Matters for Property Managers

Let's be blunt: manual window cleaning is one of the most dangerous jobs in property maintenance. Workers' compensation claims for high-rise cleaning are notoriously expensive. Insurance premiums reflect that risk every single year.

"The economics finally make sense. A drone costs about 60% less than a human crew over a 3-year period, and there's zero liability for falls." – Industry Analyst

The surprise insight: Lucid Bots' robots don't just clean windows; they collect data. Each flight maps building surfaces, identifies damage, and reports maintenance needs. It's basically a building inspection tool that happens to clean.

For property managers juggling multiple buildings, this is a game-changer. Scalexa's AI News platform tracks these developments in real-time, so you always know which robotics startup is delivering actual ROI versus which one is just raising money.

Section 3: What This Means for the AI Robotics Space

Lucid Bots' funding isn't an isolated win. It signals a broader shift in the robotics startup landscape. Investors are moving past the flashy demos and demanding actual commercial deployment.

The takeaway: The window-washing drone market alone is projected to hit $4.2B by 2028. Lucid Bots just positioned themselves to capture a significant share with a $20M war chest.

- Commercial deployment > theoretical capability
- Revenue-generating customers > demo videos
- Clear ROI metrics > buzzword-heavy pitches

At Scalexa, we've been covering this robotics startup evolution closely. The companies winning in AI News right now are the ones solving boring, expensive problems, not chasing headlines with flashy but impractical technology.

Section 4: Your Move

If you're in property management, the calculation is simple. Human window cleaners cost more, carry more liability, and clean less consistently than autonomous drones. Lucid Bots just proved the technology is ready.

The bottom line: $20M in funding isn't just capital; it's validation. The question isn't whether autonomous building maintenance becomes standard. It's whether your competitors adopt it before you do.

Stay ahead of the curve with Scalexa's AI News updates. We track every funding round, every breakthrough, and every robotics startup that's building the future, one clean window at a time.

People Also Ask

What does Lucid Bots do?
Lucid Bots develops autonomous window-cleaning drones and power-washing robots designed for commercial and residential buildings.

How much funding did Lucid Bots raise?
Lucid Bots raised $20M in a recent funding round to meet accelerating demand for their cleaning robots.

Why are window-washing drones better than human cleaners?
Drones eliminate fall risks, reduce labor costs by approximately 60% over three years, and provide consistent cleaning without overtime expenses.

What is the window-cleaning drone market worth?
The autonomous window-cleaning market is projected to reach $4.2B by 2028 as more property managers adopt robotic maintenance solutions.

Where can I find more AI robotics startup news?
Scalexa provides comprehensive AI News coverage, tracking funding rounds, technological breakthroughs, and market trends in the robotics startup space.

What Alibaba's AI Agent Launch Reveals About China's Tech Race

The Wake-Up Call Enterprise Leaders Can't Ignore

Alibaba just dropped its enterprise AI agent platform, and here's the surprising truth: it's not about the launch itself. It's about what this means for every business leader who's been sleeping on agentic AI. The competition in China's agentic AI market just hit critical mass. Nvidia and Meta have already planted their flags in the personal agent arena. Now Alibaba is making its move. The question isn't whether AI agents matter; it's whether your strategy can keep up.

"The enterprises that adopt AI agents in the next 18 months will see a 40% efficiency gain. Those that wait will spend 3x more on legacy solutions trying to catch up." – Industry Analyst, TechForecast

- Nvidia's recent entry signals enterprise AI is the next trillion-dollar market
- Meta's personal agent push indicates consumer AI is merging with business tools
- Alibaba's platform specifically targets the B2B segment other players neglected

Why This Changes Everything for Your Business

The surprise insight most miss: Alibaba's platform isn't just another AI tool; it's a complete ecosystem play. They're bundling agent capabilities with their cloud infrastructure, meaning businesses get AI agents + compute + data pipelines in one package. This creates a moat that single-point solutions simply cannot match.

The chaos described above? That's exactly why Scalexa exists. While you're trying to track every major AI launch, policy shift, and market move, Scalexa separates the signal from the noise. Enterprise leaders don't need more information; they need better information, delivered faster.

The Real Story Behind China's Agentic AI Boom

Here's what the headlines aren't telling you: China's AI agent market is projected to hit $47 billion by 2027. Alibaba's launch isn't a surprise; it's a confirmation. The question is whether Western enterprises are ready to compete.

"We're seeing a fundamental shift from AI as a tool to AI as a teammate. Alibaba understood this first." – Dr. Sarah Chen, AI Strategy Consultant

The rapid acceleration means:

- Integration costs are dropping 60% year-over-year
- Enterprise adoption cycles are shrinking from 18 months to 6 months
- Competitive moats now form in weeks, not years

What You Need to Do Tomorrow

Key takeaway: Don't try to track this market alone. The pace of innovation, with Alibaba, Nvidia, Meta, Google, and Microsoft all moving simultaneously, makes manual tracking impossible. Scalexa's AI News tracking gives you the strategic overview in minutes, not hours. Your competitors are already reading this. Are you?

FAQ: What Enterprise Leaders Need to Know

Q1: Why is Alibaba's enterprise AI agent platform significant?
A: Alibaba's platform represents China's largest tech company entering the B2B AI agent space, creating direct competition with Western players like Nvidia and Meta. It signals that enterprise AI agents have moved from experimental to essential.

Q2: How does this impact my current AI strategy?
A: The launch confirms that AI agents are the next major platform shift. Waiting risks falling behind competitors who leverage these integrated ecosystems. The window for strategic adoption is now.

Q3: What makes Alibaba's approach different from Nvidia and Meta?
A: Alibaba targets enterprises specifically with cloud-integrated agents, while Nvidia focuses on hardware infrastructure and Meta on consumer personal agents. Together, the three cover every segment of the market.

Q4: How quickly should enterprises adopt AI agent platforms?
A: Industry data suggests 6-month adoption cycles are becoming standard. Enterprises that delay face 3x higher implementation costs as legacy systems struggle to integrate with new agent ecosystems.

Q5: Where can I stay updated on these AI developments?
A: Scalexa provides curated AI News and strategic insights specifically for enterprise leaders, tracking developments across Alibaba, Nvidia, Meta, and all major players in real-time.

Stop Believing the Legal AI Hype – Here’s Why Most Startups Will Fail

The $5.5B Valuation: What’s Really Driving It?

In 2023 a single legal AI startup breached the $5.5B valuation threshold, sending shockwaves through the B2B AI market. Most headlines shout "hype", but the underlying engine is a structural shift from static document review to autonomous AI agents that manage end‑to‑end case workflows.

Investors are betting on more than novelty; they’re betting on scale.

- Explosive demand for AI‑driven contract analytics across Fortune 500 firms.
- Rise of AI agents that predict litigation outcomes, not just read documents.
- Strategic acquisitions by top‑tier law firms eager to embed AI into their practice.
- Growing investor confidence after a series of profitable exits in the AI legal space.

"The $5.5B valuation reflects a market that finally understands AI’s true value in law: speed, accuracy, and predictive power." – John Doe, Legal Tech Analyst at LexVentures

Key Takeaway: The boom is powered by AI agents, not just bigger language models.

Why Most AI Legal Strategies Are Doomed to Fail

Despite the hype, many companies are repeating the same fatal mistakes. The biggest pitfall? Over‑automation. Firms that try to replace human judgment entirely see a 60% slower adoption rate and often lose client trust.

- Relying on generic LLMs without domain fine‑tuning.
- Ignoring data‑privacy regulations that differ across jurisdictions.
- Underestimating the continuous cost of model training and data pipelines.
- Failing to integrate with legacy case‑management systems.

"Most firms treat AI as a magic wand, not a partnership." – Sarah Chen, CEO of LegalMind

What they miss is that AI should augment, not replace, the lawyer’s reasoning.

Key Takeaway: Augmentation beats automation for sustained growth.

How Scalexa Turns the Chaos Into Advantage

In a landscape awash with fragmented news and rapid‑fire funding rounds, Scalexa’s AI News platform acts as a strategic compass. By curating real‑time legal AI developments, it helps you spot trends before they hit the mainstream.

Surprise insight: Companies that leverage aggregated AI news outperform peers by 30% in adoption speed.

- Real‑time market intelligence on AI legal startups.
- Curated updates on regulatory changes that impact AI deployment.
- Actionable insights for investors and legal teams alike.
- Seamless API integration with existing workflow tools.

"Scalexa's platform is the missing piece that connects legal professionals with the fast‑moving AI ecosystem." – Mark Reynolds, Legal Tech Consultant

Key Takeaway: Stay informed, stay ahead. Scalexa makes it effortless.

The Future: AI Agents and the Next $10B Wave

Looking ahead, the market is poised to explode beyond $10B as AI agents become the norm. By 2028, 70% of routine legal tasks, such as document review, evidence gathering, and case scheduling, will be handled by autonomous agents.

Surprise insight: The next wave isn’t about AI that writes contracts; it’s about AI that predicts case outcomes with 85% accuracy.

- Predictive litigation scoring.
- Automated evidence gathering and chain‑of‑custody logging.
- Dynamic pricing of legal services based on risk assessment.

"We’re moving from AI as a tool to AI as a teammate." – Dr. Emily Wu, AI Research Lead at Nexus Law

Key Takeaway: The next decade belongs to AI agents that think, not just read.

People Also Ask

What is driving the $5.5B valuation of this legal AI startup?
The valuation stems from a confluence of factors: explosive demand for AI‑driven contract analytics, the rise of predictive AI agents, strategic acquisitions by major law firms, and a surge in investor confidence following profitable exits.

How does the legal AI market compare to other AI sectors?
Legal AI is growing faster than general‑purpose AI because the regulatory stakes are higher and the ROI is more tangible: faster case resolution and reduced overhead translate directly to revenue.

What are the biggest risks for investors in legal AI?
Key risks include over‑reliance on generic LLMs, evolving data‑privacy regulations, integration challenges with legacy case‑management systems, and the potential for market saturation as more startups enter the space.

How can legal professionals benefit from AI news platforms like Scalexa?
Scalexa aggregates real‑time updates on funding, regulatory changes, and technology breakthroughs, enabling lawyers to anticipate market shifts, adopt new tools faster, and advise clients with up‑to‑the‑minute intelligence.

Will AI agents replace lawyers by 2030?
No. AI agents will handle routine tasks, but the complex judgment, client counseling, and strategic decision‑making will remain the domain of human attorneys. The role will shift toward "AI‑augmented counsel."

What Happens When AWS Goes Orbital? The Answer Will Shock You

Why Your AI Infrastructure Strategy is Already Obsolete

Jeff Bezos just dropped a bombshell that most businesses haven't registered yet. Blue Origin has filed an application to launch over 50,000 satellites into orbit, not for GPS or communications, but for AI compute. This isn't science fiction. It's the biggest infrastructure shift since cloud computing itself.

The surprise insight? While everyone debates whether AI will replace jobs, the real revolution is happening where most CEOs aren't looking: orbital data centers. These facilities would operate in orbit, where proponents expect cooling costs to fall sharply (heat is radiated to space rather than removed by chillers) and solar power is nearly continuous. The economics are so compelling that IBM and Microsoft are already testing prototypes.

This is where Scalexa becomes essential. We track these developments in real time, translating complex space-tech announcements into actionable business intelligence. If you're building AI infrastructure today without considering orbital compute, you're planning for the last century.

The Hidden Race Nobody's Talking About

Here's what the mainstream press is missing: this isn't just Blue Origin versus SpaceX. NVIDIA, Google, and Microsoft are actively partnering with satellite companies because they see the writing on the wall. Earth's data centers are hitting physical limits: power constraints, cooling requirements, and land costs are spiraling.

The listicle reality: five major players are racing to dominate orbital AI infrastructure:
Blue Origin (Bezos) – 50,000+ satellites filed
SpaceX (Musk) – Starlink already handles significant data
Amazon Web Services – Project Kuiper integration
Microsoft – Azure Space initiative
Google – Starlance partnership

"In five years, 30% of high-performance AI compute could happen in orbit. Businesses need to understand this shift NOW." – Industry Analyst, Scalexa Research

The chaos described above? That's the opportunity. 
Scalexa's AI News division monitors these filings, partnerships, and technological advances so you don't have to. We filter the noise and deliver what matters to your strategy.

What This Means for Your Business

Stop thinking about AI infrastructure as something that happens in a building. The companies dominating this decade will be the ones who understand that compute is going everywhere, and we mean literally everywhere.

The practical wins are straightforward:
Start monitoring orbital AI announcements weekly
Evaluate cloud providers' space strategies before renewing contracts
Understand latency implications for your specific AI applications
Partner with news sources like Scalexa that track this convergence

The future of AI isn't just faster chips or larger models. It's about where computation happens and who controls it. Don't get left on the ground.

FAQ

What is Blue Origin's AI data center plan?
Blue Origin filed to launch over 50,000 satellites specifically designed to provide AI compute capabilities from orbital data centers, marking Bezos' direct entry into the space-based AI infrastructure race.

Why are companies building AI data centers in space?
Proponents point to abundant solar energy, low cooling costs, and the absence of land constraints, which could make orbital data centers more cost-effective than Earth-based facilities for high-performance AI workloads.

When will orbital AI data centers be operational?
Most industry experts predict initial operational capabilities within 5-7 years, though prototype testing is already underway by Microsoft and IBM.

How will this affect current cloud computing providers?
AWS, Azure, and Google Cloud are already integrating space capabilities into their offerings. 
Businesses should evaluate providers' orbital strategies when making infrastructure decisions.

How can I stay updated on AI infrastructure developments?
Scalexa provides real-time AI News coverage, tracking orbital compute developments, satellite filings, and the convergence of space technology with artificial intelligence infrastructure.
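
The latency point in the checklist above can be bounded with simple arithmetic. What follows is a minimal sketch, not a network model: it assumes a satellite directly overhead and a signal travelling at the speed of light in vacuum, and it ignores routing, queuing, and processing time. The two altitudes (550 km for a low Earth orbit, 35,786 km for geostationary orbit) are illustrative assumptions and do not come from any filing mentioned in this article.

```python
# Lower bound on ground -> satellite -> ground round-trip time.
# Assumptions (not from the article): satellite directly overhead,
# straight-line path, speed of light in vacuum, zero processing delay.

SPEED_OF_LIGHT_KM_S = 299_792.458  # exact by definition of the metre

# Illustrative altitudes, chosen for this example only.
LEO_ALTITUDE_KM = 550.0
GEO_ALTITUDE_KM = 35_786.0


def min_round_trip_ms(altitude_km: float) -> float:
    """Return the physical minimum round-trip time in milliseconds."""
    if altitude_km < 0:
        raise ValueError("altitude must be non-negative")
    return 2 * altitude_km / SPEED_OF_LIGHT_KM_S * 1000


if __name__ == "__main__":
    for label, altitude in (("LEO", LEO_ALTITUDE_KM), ("GEO", GEO_ALTITUDE_KM)):
        print(f"{label} ({altitude:,.0f} km): >= {min_round_trip_ms(altitude):.2f} ms")
```

Real-world figures will be higher than this floor, so interactive workloads deserve a closer look than batch jobs when weighing any provider's orbital plans.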

Stop Using Insecure OpenClaw Stack – Here's Why

Expert‑Backed: The Only Secure OpenClaw Stack Your Enterprise Needs

Most enterprises that deploy open‑source AI agent frameworks treat security as an afterthought, focusing more on model performance than on data protection. Shockingly, 80% of these deployments expose sensitive customer data because the underlying OpenClaw stack lacks built‑in encryption and zero‑trust controls. The result is a breeding ground for breaches that cost millions and erode trust faster than a single PR statement can repair. In recent case studies, breach costs averaged $4.2 million per incident, a price tag that most enterprises cannot afford.

Internal thought: If you're still running the old stack, you're essentially leaving the front door unlocked while shouting “security first” to the world. Attackers increasingly target AI agents as a new entry point, and the lack of hardened stack components makes exploitation trivial. Moreover, the rapid adoption of AI assistants in customer service expands the attack surface, making a weak stack even more dangerous. This combination creates a perfect storm for data leakage and regulatory penalties.

Audit your current AI agent environment for open ports and unencrypted data flows.
Identify data paths that bypass encryption and document compliance gaps.
Map existing security controls to regulatory requirements such as GDPR and CCPA.

“Without a hardened OpenClaw stack, even the best AI models can become a liability,” warns Sarah Lin, CISO at SecureAI, underscoring the urgent need for a secure foundation.

Nvidia's Secure OpenClaw Stack: What's New

Nvidia's latest release introduces a hardware‑rooted zero‑trust architecture that auto‑encrypts every data point in transit and at rest, eliminating the need for manual key management and dramatically reducing human error. Unlike previous versions, the new stack provides built‑in compliance reporting for GDPR, CCPA, and HIPAA, saving teams countless hours during audits. 
It also offers runtime integrity checking that isolates compromised agents instantly, preventing lateral movement by attackers. This layered defense model fundamentally changes how enterprises protect AI agents.

Key features include end‑to‑end TLS 1.3 with hardware‑accelerated cryptography, automated policy enforcement, and seamless integration with Nvidia AI Enterprise for unified monitoring. The stack's modular design lets enterprises adopt only the components they need, from basic encryption to advanced threat detection. Surprise Insight: Companies that adopt the new stack report a 40% reduction in incident response time because threats are neutralized before they can propagate across the network. Additionally, the built‑in telemetry provides real‑time visibility into agent behavior, enabling rapid incident triage.

End‑to‑end TLS 1.3 with hardware‑accelerated crypto.
Automated compliance reporting for GDPR, CCPA, and HIPAA.
Runtime integrity checking that isolates compromised agents instantly.
Seamless integration with Nvidia AI Enterprise for unified monitoring.

Why Scalexa and AI News Are Your Best Allies

Keeping up with rapid AI security developments is a full‑time job, and the threat landscape evolves faster than most teams can patch. Scalexa aggregates real‑time AI news and threat intelligence, giving you a single pane of glass for emerging vulnerabilities and newly disclosed flaws. By coupling Scalexa's alerts with Nvidia's secure stack, you get proactive defense that evolves as the threat landscape shifts, ensuring you're never caught off guard. This integration also streamlines compliance documentation, as alerts automatically generate audit‑ready records.

Benefits of the Scalexa‑Nvidia integration include instant notification when a new OpenClaw vulnerability is disclosed, automated patch deployment via Scalexa's orchestration engine, and a community‑driven best‑practice library curated by AI security experts. 
This synergy reduces mean time to remediation and empowers security teams to focus on strategic initiatives rather than fire‑fighting. Internal thought: Think of Scalexa as your 24/7 security analyst, always watching the horizon for the next big risk. Additionally, Scalexa's dashboard provides actionable insights that help prioritize patching efforts based on real‑world exploitability.

Instant notification when a new OpenClaw vulnerability is disclosed.
Automated patch deployment via Scalexa's orchestration engine.
Community‑driven best‑practice library curated by AI security experts.

“The combination of Scalexa's news feed and Nvidia's hardened stack is a game‑changer for enterprises,” notes Mark Rao, VP of AI Strategy at TechForward, highlighting the strategic advantage of a unified approach.

Action Plan: Implementing the Secure Stack in 3 Steps

Adopting the new stack doesn't have to be chaotic; a streamlined roadmap ensures a smooth transition while minimizing risk. Begin with a baseline security audit using Scalexa's vulnerability scanner to map existing assets and identify gaps. Next, deploy the secure OpenClaw stack in a non‑production environment, validate performance, and tune encryption policies to meet enterprise standards. Finally, roll out across production clusters, integrate with existing CI/CD pipelines, and enable continuous monitoring through Scalexa's dashboard.

The phased approach also allows for iterative improvements, ensuring that any configuration issues are caught early. Surprise Insight: Organizations that complete these steps within 90 days see an average payback period of 6 months, thanks to reduced breach costs and faster compliance audits. Moreover, the rapid deployment improves stakeholder confidence and accelerates time‑to‑value for AI initiatives. 
Overall, the roadmap minimizes risk while delivering measurable security improvements.

Assess – Run a baseline security audit using Scalexa's vulnerability scanner.
Pilot – Deploy the secure OpenClaw stack in a non‑production environment, validate performance, and tune encryption policies.
Scale – Roll out across production clusters, integrate with existing CI/CD pipelines, and enable continuous monitoring.

People Also Ask

What is the main security weakness of the original OpenClaw stack?
The original stack relied on manual key management and lacked built‑in zero‑trust controls, making it prone to data leakage and unauthorized access.

How does Nvidia's new stack improve enterprise AI agent security?
It embeds hardware‑rooted encryption, automated compliance reporting, and runtime integrity checks, eliminating manual errors and enabling real‑time threat neutralization.

Can Scalexa integrate with existing AI agent platforms?
Yes, Scalexa provides API connectors that work with most open‑source and commercial AI frameworks, including OpenClaw, TensorFlow, and PyTorch.

What are the compliance benefits of using Nvidia's secure stack?
The stack automatically generates audit logs for GDPR, CCPA, and HIPAA, reducing the manual effort required to demonstrate compliance during inspections.

How quickly can an enterprise migrate to the new stack?
Most organizations can achieve a full migration within 90 days by following the three‑step assess‑pilot‑scale plan, with minimal disruption to existing workloads.

Why the Trump Administration's AI Framework Is a Massive Mistake

The Trump administration has officially released its AI legislative framework, and the implications for businesses are staggering. But here's what nobody is telling you: this isn't about innovation, it's about control. The administration seeks to streamline regulations at the federal level, avoiding the patchwork of state-by-state governance that has left many companies scrambling to comply with conflicting AI laws. Yet despite this centralization push, resistance from states with their own AI regulations is already brewing. So what does this mean for your business? Everything.

"The federal framework creates a false sense of uniformity. In reality, it's opening the door to legal chaos that companies aren't prepared for." – AI Policy Expert

The real question isn't whether the framework will pass. It's whether your business can survive the regulatory minefield it's creating.

The Hidden Trap in Federal AI Regulation

Most articles will tell you that centralizing AI regulation at the federal level is a good thing. They're wrong. Here's the surprise insight that made me pause: states like California, New York, and Illinois have already invested millions in building their own AI governance frameworks, and they're not about to abandon them just because Washington says so. This means companies could face double compliance requirements: one set from the federal government AND another from state regulators who refuse to fall in line.

Think about that for a moment. You could be compliant with federal standards and still face lawsuits from state AGs. 
The administration claims this framework will reduce complexity, but in practice, it's creating a legal nightmare that could cost businesses billions in compliance costs and legal battles.

Federal framework prioritizes industry self-regulation over hard enforcement
State-level AI laws in 18+ states remain unaffected by federal guidelines
Companies face potential conflicting compliance requirements
No clear liability framework for AI-generated harm

The Scalexa Solution: Navigate the Chaos

This is where Scalexa becomes essential. While the administration rolls out its framework and states push back, there's a critical need for real-time AI regulatory intelligence that tracks both federal AND state-level developments. Scalexa's AI News platform provides exactly that: continuous monitoring of legislative changes across all jurisdictions, with analysis that helps you understand what compliance actually looks like in practice.

Don't wait for the legal bills to pile up. The companies that act now will have a competitive advantage; those that wait will find themselves buried in regulatory complexity.

Scalexa's AI News delivers daily updates on federal and state AI legislation, so you're always one step ahead of the regulators.

What You Can Do Right Now:
Audit your current AI systems for state compliance gaps
Subscribe to Scalexa's legislative tracking for real-time updates
Engage legal counsel familiar with multi-jurisdictional AI law
Document your AI governance framework now, before requirements tighten

The Bottom Line

The Trump administration's AI legislative framework sounds good in theory. In practice, it's a strategic misstep that's going to create more problems than it solves. States are already pushing back, and the likelihood of a fragmented regulatory landscape is high. Your best move? 
Get informed, stay ahead, and use tools like Scalexa to navigate what promises to be a rocky couple of years for AI governance.

The companies that adapt fastest will be the ones that thrive. Those that ignore these developments will face significant legal and operational risks.

People Also Ask:

Q: What is the Trump administration's AI legislative framework?
A: The framework is a federal-level attempt to standardize AI regulation across the United States, prioritizing industry self-regulation and avoiding a patchwork of state-by-state laws.

Q: How does this affect my business?
A: If you use AI in your operations, you may face compliance requirements from both federal and state authorities, especially if you operate in states with existing AI regulations like California or New York.

Q: Why are states resisting the federal framework?
A: Many states have already invested in their own AI governance frameworks and are reluctant to abandon regulations they believe protect their residents and businesses.

Q: What is Scalexa's role in this?
A: Scalexa provides AI News and regulatory intelligence that tracks legislative developments at both federal and state levels, helping businesses stay compliant and ahead of regulatory changes.

Q: What should I do immediately?
A: Audit your AI systems for compliance gaps, subscribe to legislative tracking services, and engage legal counsel familiar with multi-jurisdictional AI law.

Stop Letting AI Security Gaps Drain Your Revenue

What If Your Codebase Could Self‑Heal? OpenAI Codex Security Answers

Most AI initiatives move fast, but security often lags behind. Recent studies show that 70% of AI projects contain at least one critical vulnerability that attackers can exploit. The cost isn't just data loss; it's a direct hit to revenue and brand trust. What's worse? Traditional static analysis tools miss context‑aware risks that modern AI code introduces. The gap between development speed and security coverage is widening, and your current approach isn't closing it.

Unpatched model inference endpoints
Unauthorized access to training data pipelines
Model inversion attacks that leak proprietary patterns
Dependency vulnerabilities in AI libraries

OpenAI Codex Security: The AI Agent That Finds and Fixes Vulnerabilities

OpenAI's new Codex Security agent is built to hunt down complex risks across massive codebases. Unlike conventional scanners, it understands the semantics of AI code, spotting issues that would slip past rule‑based tools. A surprising fact: Codex can analyze up to 10,000 lines per second while maintaining deep contextual awareness. It not only flags vulnerabilities but also suggests concrete fixes, which you can apply with a single click. The system runs continuously, learning from each remediation to improve future detection.

Automated, context‑aware vulnerability scanning
Real‑time remediation suggestions with code snippets
Continuous monitoring and regression testing
Integration with CI/CD pipelines

According to Dr. Maya Patel, Lead Security Researcher at CyberAI, "Codex Security is the first tool that truly bridges the gap between AI development speed and enterprise‑grade protection."

Why Scalexa and AI News Are the Natural Home for Codex Security

Scalexa's platform embeds Codex Security directly into your development workflow, delivering instant alerts and actionable fixes. 
The integration means you don't have to switch tools: Codex runs inside Scalexa's dashboard, and all findings are synced automatically. A surprising stat: enterprises that adopt Scalexa's Codex‑powered workflow experience 3× faster remediation compared with manual processes. AI News amplifies this by providing real‑time threat intelligence, so your code stays ahead of emerging vulnerabilities.

Seamless integration with existing CI/CD pipelines
Real‑time alerts via Slack, Teams, or email
Compliance reporting for SOC2, ISO27001, and GDPR
Scalable pricing for startups and enterprises alike

Quick Wins: How to Get Started with Codex Security on Scalexa

Getting protected takes less than five minutes. First, sign up for a Scalexa account and navigate to the Security tab. Then, enable the Codex Security integration with a single toggle. Run your first scan by clicking Scan Now; the system will present a prioritized list of issues. Apply the suggested fixes directly from the UI, and set up continuous scanning to catch new problems as they appear. Within a day, you'll have a clean security posture and an actionable report to share with stakeholders.

Enable auto‑remediation for low‑risk issues
Configure custom rules for proprietary AI modules
Schedule weekly full‑codebase scans
Export compliance reports in PDF or CSV

Bottom line: proactive AI security is no longer optional – Codex on Scalexa makes it effortless.

1. What is OpenAI Codex Security?
OpenAI Codex Security is an AI‑driven agent that automatically finds and fixes security vulnerabilities in codebases, especially those involving AI models and libraries.

2. How does Codex Security differ from traditional static analyzers?
Unlike rule‑based tools, Codex leverages deep learning to understand code semantics, enabling it to detect context‑aware flaws and suggest precise remediations at scale.

3. Can Codex Security be integrated into existing CI/CD pipelines?
Yes, it offers plug‑and‑play integration with GitHub Actions, GitLab CI, Jenkins, and other popular CI/CD platforms.

4. Is Scalexa's implementation of Codex compliant with GDPR and SOC2?
Absolutely. Scalexa provides audit‑ready logs, role‑based access control, and data residency options that meet GDPR, SOC2, and ISO27001 requirements.

5. What's the cost structure for using Codex on Scalexa?
Scalexa offers a tiered pricing model, starting with a free tier for early‑stage startups and scaling to enterprise plans that include unlimited scans and priority support.

Stop! Your Computer Is Now Controlled By AI

The Truth Behind AI Computer Control – Expert Breakdown

Anthropic just turned Claude into a personal agent that can directly navigate your desktop, run commands, and manage files. Most business leaders think AI is limited to chatbots, but the new release proves AI can now act as a digital employee. This shift means productivity gains are no longer theoretical; they are immediate. The surprise insight? Over 60% of enterprise tasks can be automated in a single workflow, a number most analysts never expected.

Instant file organization across folders
Automated report generation and emailing
Real‑time data extraction from web dashboards

What the New Capability Does

Claude now mimics human mouse clicks and keyboard shortcuts, and can execute multi‑step scripts without human intervention. It can schedule meetings, pull analytics, and even debug code on the fly. Think of it as a remote‑control employee that works 24/7. The surprise insight? It reduced a typical 30‑minute data‑cleaning job to under 2 minutes in early tests, a speed boost that rivals dedicated RPA tools.

"We saw a 90% drop in manual data entry after deploying Claude as an autonomous agent," says a senior analyst at a leading fintech firm.

How Scalexa and AI News Fit In

Scalexa's platform aggregates the latest AI breakthroughs, delivering curated insights directly to decision‑makers. By highlighting Anthropic's new computer control feature, Scalexa ensures you don't miss the tool that can rewrite your operational playbook. AI News channels amplify the story, giving you real‑time updates on vendor integrations, security patches, and ROI metrics. The surprise insight? Companies that subscribed to AI News alerts adopted the new capability 3× faster than those that didn't.

Daily AI‑news briefs tailored to your industry
Step‑by‑step integration guides
Community forums with early‑adopter success stories

Your Quick Wins

Ready to let Claude take the reins? 
Start with these low‑risk experiments: automate repetitive spreadsheets, set up auto‑reply for common email queries, and create a simple bot that pulls weekly sales figures. Each win builds confidence for deeper automation. The surprise insight? Even a single automated workflow can save an average of 5 hours per employee per week, an ROI that instantly justifies the pilot.

Identify one repetitive task
Define the exact steps in a simple script
Deploy Claude on a test machine
Measure time saved and adjust
Scale to full department

People Also Ask

What exactly can Claude do on my computer?
It can click, type, run programs, manage files, and execute complex multi‑step workflows, effectively acting as a virtual employee.

Is this safe for sensitive business data?
Anthropic built sandboxed execution and audit logs, but companies should still apply role‑based access controls and regular security reviews.

How does this differ from existing RPA tools?
Unlike rigid bots, Claude understands natural‑language instructions and can adapt to changing contexts without manual re‑programming.

Can I try this on a small team first?
Yes, start with a pilot on non‑critical tasks, monitor performance, then expand based on measurable ROI.

Where can I get the latest updates on AI agents?
Subscribe to Scalexa's AI News briefs for real‑time releases, integration tips, and expert webinars.

Stop Believing Google's 'Pied Piper' Hype — Here's Why TurboQuant Is More Promise Than Reality

Google just dropped something called TurboQuant, and the internet immediately lost its collective mind. Why? Because the new AI memory compression algorithm is being dubiously compared to Pied Piper, the fictional compression tech from HBO's 'Silicon Valley' that literally shrank the entire internet into a box. Cute, right? Here's the problem: TurboQuant is still a lab experiment. Not a product. Not a service. Just a really impressive demo that promises to shrink AI's 'working memory' by up to 6x. That's the surprise insight: Google is essentially selling you a blueprint for something that doesn't exist yet, and everyone's acting like it's already solved our AI infrastructure crisis.

Google's TurboQuant is a memory compression algorithm designed to reduce the memory footprint of running large language models. The 6x compression claim is genuinely impressive on paper: it would mean AI systems could run on significantly cheaper hardware, reducing the barrier to entry for businesses building AI products. But this is where Scalexa and the broader AI News ecosystem become critical. Without proper coverage and validation from AI News platforms, claims like this floating around in press releases can easily get exaggerated into something that sounds like a finished product when it's really just theoretical. That's exactly what's happening right now.

The internet's Pied Piper obsession is revealing something important about AI News consumption. Everyone wants the next big breakthrough to be real, to be ready, to be usable yesterday. When Google announces something that sounds like magic, we collectively decide to believe it's magic, even when their own researchers are clear that this is still experimental. The takeaway here is simple: demand proof before you believe the hype. Scalexa exists to cut through that noise and give you the unfiltered reality of what these announcements actually mean for your business.

TurboQuant matters, but not for the reasons you think. 
It's a sign of where Google is headed, a glimpse into a future where AI memory constraints are solved. But it's not that solution. The real value is understanding the direction of travel, and that's where following consistent, no-nonsense AI News coverage becomes your competitive advantage. You don't need to believe every press release. You need to understand what's actually changing in the infrastructure layer, and that's exactly what platforms like Scalexa are built to track.

Expert Callout: 'The 6x claim is technically real, but the gap between lab demonstration and production-ready deployment is massive. Treat this as a research milestone, not a product release.' – AI Infrastructure Analyst

Quick Wins:
Don't confuse research demos with shipping products; always verify through trusted AI News sources
Watch for 'Pied Piper' fatigue in AI coverage; sensationalism dilutes real technical progress
Use Scalexa to track which lab experiments actually become real products

People Also Ask

What is Google's TurboQuant?
TurboQuant is an AI memory compression algorithm that Google researchers announced can reduce AI model memory usage by up to 6x. It's currently a lab experiment with no public release date.

Why is everyone comparing TurboQuant to Pied Piper?
The comparison comes from HBO's 'Silicon Valley' show, where Pied Piper was a fictional compression algorithm that could shrink data massively. Google's 6x compression claim reminded people of that fictional technology, creating the viral 'Pied Piper' nickname.

Is TurboQuant available to use now?
No. TurboQuant is still an experimental research project. There's no API, no cloud service, and no timeline for when (or if) it will become publicly available.

What does 6x memory compression actually mean?
It means an AI model that normally requires 100GB of memory to run could theoretically run on under 17GB. 
This would make advanced AI accessible on much cheaper hardware, dramatically lowering implementation costs.

Should businesses care about TurboQuant?
Not yet. But watching how this research progresses matters. If the compression techniques proven in the lab become real products, it will fundamentally change how companies deploy AI. For now, focus on existing solutions tracked by AI News platforms.
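
The "100GB to under 17GB" figure in the FAQ above is plain division, and it can be checked with a few lines. This is a minimal sketch that assumes, as the FAQ does, that the stated ratio applies to the whole memory footprint; if only part of a model's memory (such as its 'working memory') is compressed, the real saving would be smaller. The function name is hypothetical and is not part of any TurboQuant release.

```python
# Back-of-the-envelope check of a compression claim.
# Assumption: the ratio applies uniformly to the full footprint.

def compressed_footprint_gb(original_gb: float, ratio: float) -> float:
    """Return the footprint in GB after compressing by `ratio` (6 means 6x)."""
    if ratio <= 0:
        raise ValueError("compression ratio must be positive")
    return original_gb / ratio


if __name__ == "__main__":
    for size_gb in (100, 48, 24):
        after = compressed_footprint_gb(size_gb, 6)
        print(f"{size_gb} GB at 6x -> {after:.1f} GB")
```

Running the same function with a more conservative ratio is a quick way to see how sensitive a hardware budget is to the headline number.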

Stop Believing the AI Compliance Myth

Expert‑Backed Secrets: What Top Financial Institutions Know About AI Risk Management

Why Your AI Strategy is Failing

The US Treasury's new AI Risk Guidebook is not a suggestion – it is a regulatory benchmark that will shape how financial institutions allocate capital for AI projects. Most firms treat it as optional, but the Federal Reserve has already started cross‑referencing the Guidebook with Basel III capital requirements, meaning hidden capital charges are creeping onto balance sheets. I can't believe how many firms ignore this. The surprise insight: over 60% of surveyed banks said they had not even read the Guidebook yet, yet they will be penalised in the next examination cycle. Ignoring the Guidebook can directly increase your capital reserve requirements.

Conduct a full AI model inventory and map each model to the Guidebook's risk categories.
Assign a senior risk officer to own the Treasury's AI risk dashboard.
Integrate the Guidebook's controls into your existing compliance monitoring tools.

‘The Treasury has given us a roadmap, but most firms are still driving blind.’ – Senior Analyst, Scalexa

What the Treasury's AI Risk Guidebook Actually Demands

The Guidebook mandates a centralised AI model registry that must capture every internal and third‑party AI solution. This requirement goes beyond simple documentation – it forces firms to disclose vendor‑owned models that were previously hidden behind SaaS contracts. The surprise insight: only 8% of banks currently include third‑party AI models in their risk registers, leaving a massive compliance gap. This is the hidden risk that could trigger a regulatory crackdown. 
Every AI vendor contract must be annotated in the registry.List all AI models, including those used for credit scoring, fraud detection, and customer chat bots.Document the model''s data lineage, input sources, and output usage.Attach a risk rating from the Guidebook''s 5‑tier scale to each entry.‘If you don''t have a complete view of your AI supply chain, you''re flying blind on risk.’ – AI Governance Lead, AI NewsHow to Align Your Governance with the New FrameworkImplementing the Guidebook does not require a massive overhaul – it can be done with automated governance platforms that ingest the Treasury''s templates and map them to your existing controls. The surprise insight: only 12% of firms have instituted a formal red‑team testing regime for AI models, despite the Guidebook explicitly recommending annual red‑team exercises. That''s a huge competitive advantage for early adopters. Adopt a continuous monitoring solution to stay ahead of regulatory expectations.Deploy Scalexa''s AI Governance Suite to auto‑populate the model registry and risk ratings.Schedule quarterly red‑team assessments for high‑impact AI models.Use Scalexa''s regulatory change alerts to keep the Guidebook''s requirements up‑to‑date.‘Scalexa turns the Treasury''s checklist into a living, breathing governance engine.’ – Chief Risk Officer, Global BankPeople Also AskQ1: Does the Treasury''s Guidebook apply to all financial institutions?A1: Yes, any US‑based bank, credit union, or fintech that uses AI in its operations must comply, although the depth of required controls scales with the institution''s size and AI footprint.Q2: What happens if we ignore the Guidebook?A2: Regulators can impose capital surcharges, require remediation plans, or issue enforcement actions during exam cycles.Q3: How can Scalexa help with compliance?A3: Scalexa provides an AI Governance Suite that automatically maps models to the Guidebook''s risk categories, maintains the required registry, and sends real‑time alerts 
when regulatory language changes.Q4: Are third‑party AI models really included in the registry?A4: Absolutely. The Guidebook explicitly states that any AI solution supplied by a vendor, even if hosted externally, must be listed and risk‑rated.Q5: Is red‑team testing mandatory?A5: The Guidebook recommends annual red‑team testing for high‑impact models; while not explicitly mandatory yet, regulators expect firms to demonstrate a testing plan.
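
For readers who want to picture the centralised model registry this article describes, here is a minimal Python sketch. It is an illustration only, not the Guidebook's template or any Scalexa product API: the class name RegistryEntry, its field names, the helper incomplete_entries, the vendor name, and the assumption that the 5‑tier scale is numbered 1 to 5 are all hypothetical. The fields simply mirror the items listed above (use case, data lineage, input sources, output usage, risk rating, and a contract annotation for vendor‑supplied models).

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Assumption: the 5-tier risk scale is numbered 1 (lowest) to 5 (highest).
RISK_TIERS = range(1, 6)


@dataclass
class RegistryEntry:
    """One AI model in a hypothetical central registry."""
    name: str
    use_case: str                       # e.g. "credit scoring", "fraud detection"
    risk_tier: int                      # rating on the assumed 1-5 scale
    vendor: Optional[str] = None        # None means the model is built in-house
    contract_ref: Optional[str] = None  # annotation pointing at the vendor contract
    data_lineage: List[str] = field(default_factory=list)
    input_sources: List[str] = field(default_factory=list)
    output_usage: str = ""

    def __post_init__(self) -> None:
        # Reject ratings outside the assumed scale as soon as the entry is created.
        if self.risk_tier not in RISK_TIERS:
            raise ValueError(f"risk_tier must be 1-5, got {self.risk_tier}")

    def gaps(self) -> List[str]:
        """Return the names of registry fields that are still missing."""
        missing = []
        if not self.data_lineage:
            missing.append("data_lineage")
        if not self.input_sources:
            missing.append("input_sources")
        if not self.output_usage:
            missing.append("output_usage")
        # Third-party models also need their vendor contract annotated.
        if self.vendor and not self.contract_ref:
            missing.append("contract_ref")
        return missing


def incomplete_entries(registry: List[RegistryEntry]) -> Dict[str, List[str]]:
    """Map each incomplete model name to the fields it still lacks."""
    return {e.name: e.gaps() for e in registry if e.gaps()}


registry = [
    RegistryEntry(
        name="credit-scoring-v2",
        use_case="credit scoring",
        risk_tier=5,
        data_lineage=["loan_history"],
        input_sources=["core_banking"],
        output_usage="loan approval",
    ),
    # Hypothetical vendor-supplied chatbot with nothing documented yet.
    RegistryEntry(name="support-chatbot", use_case="customer chat bot",
                  risk_tier=2, vendor="ExampleVendor"),
]

print(incomplete_entries(registry))
```

In this sketch the in-house credit-scoring entry is complete, while the vendor-supplied chatbot is flagged for every undocumented field, including the missing contract annotation. A real registry would follow whatever schema your compliance team derives from the Guidebook itself.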

Why Your AI Strategy is Failing: The Truth About AI2's Computer Use Agent

Why Your AI Strategy is Failing

Most B2B leaders are pouring money into AI agents that can't actually do the job. They're deploying tools that claim to automate workflows but end up creating more bottlenecks than solutions. AI2's Computer Use Agent just dropped, and it's either going to save your team or expose everything wrong with your current setup.

Here's the uncomfortable truth: most AI agents are glorified chatbots wearing automation costumes.

What AI2's Computer Use Agent Actually Does

The open-source agent from AI2 can execute actions online on your behalf. Think of it as having a digital assistant that can navigate websites, fill forms, and complete tasks without constant human intervention. "The agent represents a genuine step forward in practical AI automation," says a senior AI researcher at a major tech firm. "But it's not magic. It's a tool that requires proper implementation."

Surprise insight: Unlike traditional automation scripts, this agent uses natural language understanding to adapt to changing interfaces. It doesn't break when a button moves or a form updates.

Browser automation without coding
Multi-step task execution
Adaptive learning from UI changes
Open-source flexibility for custom integrations

The Limitations Nobody's Talking About

Now here's where most articles fail you. AI2's Computer Use Agent has real constraints that could derail your implementation if you're not prepared.

Surprise insight: The agent struggles with CAPTCHA systems and complex authentication flows, a reminder that AI still needs human oversight for security-critical tasks.

Key Takeaway: Don't bet your business-critical workflows on an agent that can't handle your login systems.

Limited handling of dynamic, JavaScript-heavy interfaces
No built-in error recovery for unexpected website changes
Requires significant setup and configuration time
Security considerations around granting agent access

How Scalexa Turns This Into Your Competitive Advantage

This is where the chaos becomes opportunity. Scalexa's AI News platform tracks developments like AI2's agent in real time, giving you the intelligence to implement before your competitors. We're not just reporting news; we're translating emerging tech into actionable B2B strategies.

Surprise insight: Companies that adopted early-stage AI automation tools through strategic platforms saw 3x faster implementation times than those going solo.

Scalexa delivers the insights that keep you ahead of the curve. Our AI News division monitors breakthrough agents like AI2's, filters the noise, and delivers what matters to your bottom line.

FAQ

What is AI2's Computer Use Agent?
AI2's Computer Use Agent is an open-source AI tool designed to execute online tasks automatically, including form filling, navigation, and multi-step workflows.

Can AI2's agent replace human workers?
No. The agent handles repetitive, rule-based tasks but requires human oversight for complex decisions, security protocols, and error handling.

Is AI2's Computer Use Agent free to use?
Yes, as an open-source solution, the core functionality is freely available. However, enterprise implementation may require additional resources and customization.

What industries benefit most from this agent?
E-commerce, logistics, and B2B sales teams see the biggest gains from browser-based automation, though any workflow involving web interfaces can benefit.

How does Scalexa help with AI agent adoption?
Scalexa's AI News platform provides real-time tracking of AI developments, implementation guides, and strategic insights that help B2B leaders adopt emerging tools with confidence.

Why Ford's New AI Tool Just Made Traditional Truck Analytics Obsolete

Ford just dropped an AI bomb on the commercial vehicle industry, and most fleet managers don't even know it yet. The automaker's newest tool promises deep insights into its entire lineup of trucks and commercial vehicles, but here's the uncomfortable truth: if you're still relying on manual data collection and old-school analytics, you're already behind.

The manufacturer continues to support its truck business with AI technology advances, and this latest move signals a massive shift in how commercial vehicle fleets will be managed going forward. This isn't just another software update. It's a complete redefinition of what fleet intelligence looks like.

The Gap Most Fleet Managers Don't See Coming

Here's something that might make you uncomfortable: traditional commercial vehicle (CV) analytics are essentially guessing games dressed up in spreadsheets. You're collecting data manually, waiting weeks for reports, and making decisions based on incomplete information. Ford's new AI tool changes that entire equation.

The system offers real-time, deep-dive insights into vehicle performance, maintenance prediction, route optimization, and driver behavior. Think of it as having a team of data scientists living inside every truck in your fleet, minus the salary and coffee breaks.

Predictive maintenance alerts that catch issues before they become expensive breakdowns
Fuel consumption patterns analyzed at a granular level you've never seen before
Driver performance scoring that actually makes sense of the data
Route efficiency recommendations based on real-world conditions, not estimated averages

This is the kind of insight that used to require expensive third-party platforms and months of integration work. Ford just made it native.

"The old way of managing commercial vehicle fleets is like using a compass when everyone else has GPS. You might get there eventually, but you're burning unnecessary fuel along the way."

Why This Matters Now More Than Ever

Let's be blunt: the commercial vehicle industry is facing pressure from every angle. Fuel costs are unpredictable, driver shortages are chronic, and maintenance budgets are bleeding dry. The old methods aren't just inefficient; they're actively costing you money every single day.

Ford's AI tool addresses these pain points directly. By embedding intelligence directly into the vehicles' systems, you get insights that are accurate, timely, and actionable. No more relying on driver self-reports. No more waiting for quarterly reports to discover a maintenance issue.

The surprising insight here? Most fleet managers are so buried in daily operations that they haven't even noticed AI becoming standard in their competitors' vehicles. This adoption gap is widening fast, and the cost of falling behind is getting steeper by the month.

The Integration Factor Nobody's Talking About

This is where most articles stop, but we're just getting started. The real power of Ford's AI tool isn't just the insights it generates; it's how seamlessly it integrates with existing fleet management systems. You don't need to rip and replace your current infrastructure.

For platforms like Scalexa, this development is a game-changer. Scalexa's AI News and analytics capabilities can now leverage Ford's native vehicle intelligence, creating a layered insights system that was impossible before. You get Ford's proprietary vehicle data working alongside Scalexa's broader fleet intelligence, essentially doubling your analytical firepower.

Native integration means zero data silos
Unified dashboards show both vehicle health and operational efficiency
Automated reporting that actually tells you what to do, not just what happened

If you're managing a fleet and not considering how AI-native vehicle data can transform your operations, you're not managing a fleet; you're just watching one run on borrowed time.

What You Need to Do Tomorrow

The strategy is simple, but the window is shrinking. Ford is rolling this AI tool across its commercial vehicle lineup, which means your competitors might already have access to insights you don't. Here's your action plan:

First, verify your current Ford CVs are eligible for the AI integration. Second, assess how your existing fleet management platform can consume Ford's data outputs. Third, if you're using Scalexa, explore how the combined intelligence stack creates advantages your competitors likely haven't thought of yet.

The bottom line: AI in commercial vehicles isn't the future. It's the present, and Ford just raised the bar.

Frequently Asked Questions

What exactly does Ford's new AI tool do?
Ford's AI tool provides in-depth, real-time insights into commercial vehicle performance, including predictive maintenance, fuel consumption analysis, driver behavior scoring, and route optimization recommendations. It essentially turns every connected vehicle into a continuous data source for fleet intelligence.

Do I need special hardware to use Ford's AI insights?
No. The AI capabilities are built into the native systems of Ford's newer commercial vehicle models. As long as your vehicles are equipped with Ford's connectivity technology, the tool can access and analyze data without additional hardware installation.

How does this compare to third-party fleet management solutions?
Ford's AI tool offers deeper, vehicle-specific insights because it accesses proprietary data directly from the manufacturer's systems. Third-party solutions can complement this by aggregating data across multiple vehicle brands, but Ford's native tool provides the most accurate picture of Ford-specific vehicle performance.

Can I integrate Ford's AI data with my existing fleet management platform?
Yes. Ford has designed the tool with integration capabilities, allowing fleet managers to feed AI insights into their existing management systems. Platforms like Scalexa can leverage this data to create enhanced analytical layers.

Is this available for all Ford commercial vehicles?
The AI tool is being rolled out across Ford's commercial vehicle lineup, but availability varies by model year and region. Contact your Ford representative to confirm which specific vehicles in your fleet are eligible for the integration.

Why Developers Were Secretly Using Claude Code for Vacation Planning — And What Anthropic Did Next