Our Tech & Review Collection

Explore all our latest insights, tutorials, and announcements on AI workflow and tech.

LFM2 vs. Llama 3.3: The Battle for the Pareto Frontier

Choosing Efficiency Over Hype

In this week’s AI News, the debate centers on the "Pareto frontier" of AI: the set of models whose quality cannot be improved without giving up speed, and vice versa. While Llama 3.3 is a powerhouse, the LFM2 series dominates in prefill and decode throughput, especially on non-GPU hardware. At Scalexa, we’ve benchmarked these models and found that for math-heavy and long-context tasks, LFM2’s hybrid LIV (Linear Input-Varying) operators provide a significant edge. Psychologically, this "Constant-Time" inference reduces the anxiety of scaling; your costs stay predictable even as your data grows. Scalexa helps you navigate these benchmarks to choose the engine that actually fits your hardware reality. Follow the latest technical reviews on Scalexa AI News.
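To make the Pareto frontier idea concrete, here is a minimal sketch of how a frontier can be computed from benchmark results. The model names and numbers are hypothetical placeholders, not Scalexa's measurements, and the two axes (a quality score and tokens per second) are assumptions chosen for illustration.

```python
from typing import NamedTuple


class ModelResult(NamedTuple):
    name: str
    quality: float      # benchmark score, higher is better
    throughput: float   # tokens per second, higher is better


def pareto_frontier(results: list[ModelResult]) -> list[ModelResult]:
    """Return the results that no other result beats on both axes."""
    frontier = []
    for candidate in results:
        dominated = any(
            other.quality >= candidate.quality
            and other.throughput >= candidate.throughput
            and (other.quality > candidate.quality
                 or other.throughput > candidate.throughput)
            for other in results
        )
        if not dominated:
            frontier.append(candidate)
    return frontier


# Hypothetical numbers for illustration only; not measured results.
results = [
    ModelResult("model-a", quality=0.80, throughput=40.0),
    ModelResult("model-b", quality=0.74, throughput=95.0),
    ModelResult("model-c", quality=0.70, throughput=60.0),
]
frontier_names = [r.name for r in pareto_frontier(results)]
print(frontier_names)
```

In this toy data set, model-c drops out because model-b is both more accurate and faster. Choosing between the two remaining models is then a business decision about how much quality to trade for speed.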

MiniMax-M2.7 vs. Gemini 3.1: The Battle for Open-Source Reasoning Dominance

Benchmarking the Breakthrough

In this week’s AI News, MiniMax-M2.7 is making waves for tying with Google’s Gemini 3.1 in autonomous ML benchmarks. At Scalexa, we have tested M2.7’s performance in real-world software engineering, where it achieved a staggering 56.22% on SWE-Pro. What makes M2.7 psychologically superior for developers is its "Vibe-Pro" capability: an aesthetic and functional understanding of WebDev and AppDev that feels more human than robotic. You can run this powerhouse via the official Ollama library to experience its multi-language coding mastery in Rust, Go, and TypeScript. Scalexa helps you choose between these giants, ensuring you don’t just follow the hype, but invest in the model that actually "thinks" the way your business needs. Stay updated with our AI News blog for deep-dive technical comparisons.

MiniMax-M2.7 vs. GPT-5.3: A Cost-Efficiency Breakdown for 2026

Frontier Intelligence at One-Third the Cost

In this week’s AI News, the debate centers on the economics of intelligence. While GPT-5.3 remains a heavyweight, MiniMax-M2.7 is making waves by delivering equivalent reasoning power at less than one-third the operational cost. With an Elo score of 1495 on GDPval-AA, M2.7 has become the highest-rated open-source-accessible model for professional document processing. At Scalexa, we’ve benchmarked M2.7 against frontier models and found that its "Skill Adherence" (a 97% compliance rate maintained across more than 40 complex tasks) makes it the superior choice for high-volume B2B automation. Scalexa specializes in migrating businesses to these cost-efficient stacks, allowing you to scale your AI operations without the "Enterprise Tax" of more expensive providers. We turn high-level tech into a sustainable, high-ROI asset for your brand.

NVIDIA Nemotron-3-Super vs. Llama 3.3: Choosing the Right Engine for Your Workflows

The Battle of the Open Weights

In this week’s AI News, the debate centers on NVIDIA’s Nemotron-3-Super versus Meta’s Llama 3.3. While Llama remains a versatile powerhouse, Nemotron-3-Super is built for "Throughput Excellence." At Scalexa, we have benchmarked these models and found that Nemotron’s hybrid Mamba-Transformer architecture delivers up to 7x faster inference for long reasoning sequences. For a high-volume brand, this isn’t just about being fast; it’s about the "Psychology of Momentum." When your team doesn’t have to wait for an AI to "think," their creative flow remains unbroken. Scalexa specializes in matching the right model to your specific business pain points. Whether you need the broad versatility of Llama or the surgical, high-speed reasoning of Nemotron, we ensure your tech stack is optimized for your unique growth path. At Scalexa, we don’t just follow trends; we engineer the performance that drives them.

Model Mastery: Solving the Context Explosion and Nemotron vs. Llama 3.3.

Cognitive Density: Why the "Reasoning" of GPT-5.3 and Gemini 3.1 Changes Everything

Quality Over Parameter Counts

In the latest AI News, the focus has shifted from the size of a model to its "Cognitive Density." Models like Google’s Gemini 3.1 Pro and OpenAI’s GPT-5.3 are now doubling scores on advanced reasoning benchmarks, meaning they finally "understand" complex chains of logic. At Scalexa, we use this enhanced reasoning to automate high-stakes tasks like legal document review and intricate financial modeling. The psychological barrier to AI adoption has always been the "Hallucination Fear," but with these new reasoning capabilities, that fear is dissolving. Scalexa leverages this "Adaptive Thinking" to build systems that know when to answer instantly and when to "think" longer on a complex problem. We don’t just give you a chatbot; we give you a dependable core operational asset that reasons as well as your best senior analyst.

Model Mastery: NVIDIA Nemotron-3-Super review and solving the AI hallucination problem.
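The "answer instantly or think longer" decision mentioned above can be pictured as a small routing step in front of the model. The sketch below is a deliberately crude illustration under stated assumptions: the marker words, the word-count threshold, and the function name `choose_mode` are all hypothetical, and a production router would more likely rely on a classifier or on the model's own confidence signals.

```python
import re

# Hypothetical trigger words that suggest a multi-step task (an assumption).
HARD_MARKERS = {"prove", "derive", "audit", "reconcile", "forecast"}


def choose_mode(prompt: str, max_fast_words: int = 40) -> str:
    """Return 'fast' for short, simple prompts and 'deliberate' otherwise."""
    words = re.findall(r"[a-z]+", prompt.lower())
    is_long = len(words) > max_fast_words
    looks_hard = any(word in HARD_MARKERS for word in words)
    return "deliberate" if is_long or looks_hard else "fast"


simple = choose_mode("What is our refund window?")
complex_task = choose_mode("Audit this contract clause for conflicting indemnity terms")
print(simple, complex_task)
```

The returned label would then select a larger reasoning budget or a slower model for the hard cases, while routine questions keep the low-latency path.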

The 2026 React Stack: Scaling AI-Native Web Apps with Scalexa

AI-Native Frontend Engineering

As AI News reports, the "React Stack for 2026" has evolved to place AI at the very core of the application logic. At Scalexa, we are building web apps that are no longer just rule-based systems but autonomous, goal-driven structures. By leveraging meta-frameworks like Next.js 16 and the latest React Compiler, we ensure that your UI is dynamically generated based on real-time user behavior and environmental data. This "Predictive UX" anticipates user needs, reducing friction and significantly improving Core Web Vitals. Scalexa’s expertise in "Vibe Coding," where outcomes are described in natural language and then refined by AI, allows our team to focus on high-level system architecture while the AI scaffolds the mechanical boilerplate. This approach triples productivity, allowing Scalexa to deliver enterprise-grade MVPs to the edge in record time.

Zero-Trust and Sustainable Coding

Security and sustainability are the twin pillars of the 2026 Scalexa stack. We implement "Zero-Trust Security" by default, ensuring that every request within your AI-native app is verified for identity and intent. Furthermore, Scalexa is pioneering "Green Coding" practices to minimize the environmental impact of compute-heavy AI features. By optimizing server functions and moving logic to the edge, we reduce the carbon footprint of your digital assets without sacrificing performance. As AI News highlights the growing demand for ethical and inclusive web design, Scalexa ensures your application is accessible to everyone by default. We don’t just build for the web; we build for a future where high-performance technology and human values are perfectly aligned. Scalexa is your guide to the ultimate 2026 technical foundation.

Frontend Power: React Server Components guide and the core Scalexa stack.

The Best AI Coding Assistants of 2026: From Cursor to Google Antigravity

AI-First Editors vs. Traditional Plugins

In the fast-evolving world of development, AI News in 2026 is centered on the dominance of AI-first editors. While plugins like GitHub Copilot remain popular for boilerplate generation, tools like Cursor and Google Antigravity have redefined the workflow by maintaining awareness of the entire codebase rather than just a single file. At Scalexa, we have integrated these "Agentic Editors" into our full-stack pipeline, allowing our developers to describe complex refactors in natural language that the AI then applies across dozens of files simultaneously. These tools function as autonomous partners that operate across the editor, terminal, and browser. For a technical agency like Scalexa, this translates to a 50% faster deployment cycle for enterprise-level applications. However, the true value lies in logical analysis; tools like Qodo are now being used to perform deep architectural reviews, catching bugs and security vulnerabilities before they ever reach a production environment.

The Agentic Management Interface

Modern editors now feature built-in agent management interfaces, letting developers delegate entire multi-step tasks to AI agents. AI News highlights "Windsurf" and "Cline" as leaders in this space, providing visual artifacts of progress rather than raw logs. Scalexa leverages these agentic workflows to handle legacy codebases, allowing our team to plan and implement features in unfamiliar environments with unprecedented speed. This isn’t just about typing faster; it’s about elevating the developer to the role of a "Systems Architect." By mastering these next-gen IDEs, Scalexa ensures that our full-stack solutions are not only built faster but are also more reliable and easier to maintain. We turn the raw power of AI into a structured, professional engineering asset for every client project.

Dev Efficiency: The developer toolkit review and the Scalexa tech stack.

The Verification Crisis: Scalexa’s Strategy for the New AI Trust Economy

The Cost of AI Accuracy

A profound shift is discussed in current AI News: the marginal cost of executing a task is nearing zero, but the cost of verifying it has become the new, expensive bottleneck. Scalexa calls this the "Verification Crisis." Whether the output is AI-generated code, a legal audit, or a medical diagnosis, a senior human expert must still spend time auditing it for safety and accuracy. This shift has given rise to "Liability-as-a-Service" (LaaS), where companies like Scalexa don’t just provide tools, but legally underwrite the outcomes of their AI. We use "Cryptographic Provenance" to provide a digital birth certificate for every piece of content, ensuring its authenticity in an age of deepfakes. As AI News continues to expose the dangers of "Shadow AI," Scalexa provides the secure, auditable frameworks that B2B brands need to maintain their reputation and trust in a high-speed digital economy.

Solving the "Missing Junior Loop"

One of the most engaging topics in AI News is the "Missing Junior Loop": the concern that automating entry-level tasks destroys the apprenticeship phase for new workers. If juniors aren’t practicing on routine tasks, how will they become the senior experts of tomorrow? Scalexa is solving this by redesigning entry-level roles as "AI Auditors." In these roles, juniors spend their time reverse-engineering and verifying AI outputs, gaining a deeper technical understanding than traditional manual work provided. This ensures that Scalexa clients always have a pipeline of skilled humans who can oversee the machines. We believe the future belongs to "AI-capable teams" who master the art of verification. By focusing on trust and accountability, Scalexa turns the potential liability of AI into a structured, verifiable asset for enterprise growth.

Trust Building: Implement cryptographic provenance for your content and learn to combat Shadow AI.

The Economics of AI-First SaaS: Why Usage-Based Pricing is the New Standard

The End of Per-Seat Subscriptions

In this week’s AI News, we examine a seismic shift in the SaaS industry: the death of the "per-seat" pricing model. Traditional software had high margins because serving extra users was cheap, but AI-native software is different. Every time an AI feature is used, it incurs a direct computational cost for the provider. At Scalexa, we are seeing 2026 software vendors move toward usage-based and "Outcome-Oriented" pricing. This means you pay for the value the AI creates, such as the number of tickets resolved or the amount of revenue generated, rather than just the number of employees with a login. This alignment of cost and value is a win for SMBs, as it ensures they only pay for what they actually use. Scalexa helps businesses audit their software stack to identify these "AI-First" tools that offer better ROI than bloated, legacy platforms.

The Inflection Point of Native AI

We are moving past "AI as a feature" into the era of "Native AI." As reported by AI News, 80% of enterprises will have deployed AI-enabled applications by the end of 2026. Scalexa specializes in migrating businesses from traditional, database-centric CRUD apps to intelligent systems that prioritize autonomy. These native-AI platforms don’t just store data; they act on it. Whether it is an AI-driven CRM that predicts churn before a customer complains, or a revenue forecasting tool with built-in confidence intervals, the modern B2B toolkit is designed to perform the work, not just enable it. Scalexa is your partner in this transition, ensuring your software investments are scalable, sustainable, and fundamentally intelligent.

Strategic Transition: AI consulting for enterprise evolution and the Chief AI Architect role.
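The per-seat versus usage-based comparison described above comes down to simple arithmetic, sketched here. All prices and volumes are hypothetical figures chosen for illustration; they do not describe any real vendor's pricing.

```python
def per_seat_cost(seats: int, price_per_seat: float) -> float:
    """Monthly bill under a flat per-seat subscription."""
    return seats * price_per_seat


def usage_cost(units: int, price_per_unit: float) -> float:
    """Monthly bill when paying per outcome, e.g. per resolved ticket."""
    return units * price_per_unit


def break_even_units(seats: int, price_per_seat: float, price_per_unit: float) -> float:
    """Usage volume at which both pricing models cost the same."""
    return per_seat_cost(seats, price_per_seat) / price_per_unit


# Hypothetical prices for illustration only.
seats, seat_price, ticket_price = 20, 50.0, 0.50
flat_bill = per_seat_cost(seats, seat_price)        # 20 seats at 50.0 each
usage_bill = usage_cost(1200, ticket_price)         # 1200 resolved tickets
threshold = break_even_units(seats, seat_price, ticket_price)
print(flat_bill, usage_bill, threshold)
```

With these assumed numbers, a team resolving 1,200 tickets a month pays less under usage-based pricing, and the flat plan only becomes cheaper above the break-even volume. Running the same calculation with your own figures is a quick first step in a software audit.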

The Verification Crisis: Scalexa’s Guide to the New Economics of AI Trust

The Hidden Cost of "Free" Execution

As reported in recent AI News, the marginal cost of executing cognitive tasks is plummeting toward zero, but a new bottleneck has appeared: the cost of verification. At Scalexa, we call this the "Verification Crisis." While AI can generate thousands of lines of code or complex financial reports in seconds, the time required for a human expert to audit that output for accuracy and safety is becoming the new expensive commodity. This shift is also creating a "Missing Junior Loop" in the workforce. Historically, entry-level juniors learned their craft by performing the routine tasks that AI now handles. If we automate away the apprenticeship phase, we risk losing the pipeline of senior experts needed to oversee future AI systems. Scalexa is pioneering new "Auditor Roles" for juniors, ensuring they gain deep technical insights by reverse-engineering AI outputs rather than just consuming them.

Provenance and Liability-as-a-Service

Predicting the macro trends of 2026, Scalexa sees a shift toward "Liability-as-a-Service." Future business models will move from selling software to monetizing trust. Companies will need to prove content authenticity through cryptographic provenance, essentially a digital "birth certificate" for every piece of content. As AI News continues to highlight the dangers of deepfakes and misinformation, Scalexa is helping businesses legally underwrite the risks of their AI failures by implementing robust provenance tracking. This technical layer is essential for B2B brands that must guarantee the integrity of their data in a world where seeing is no longer believing. By bridging the gap between automated speed and human-verified trust, Scalexa ensures your tech stack remains a reliable asset for high-volume operations.

Trust Economy: Solving the Missing Junior Loop and cryptographic provenance.
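As a rough illustration of the "birth certificate" idea above, the sketch below hashes a piece of content, attaches metadata, and signs the record so that later tampering can be detected. It is a simplified stand-in, not Scalexa's implementation: it uses a shared-secret HMAC from the Python standard library, whereas real provenance systems typically use public-key signatures so that anyone can verify a record without holding the signing key. The function names, key, and metadata fields are hypothetical.

```python
import hashlib
import hmac
import json


def issue_certificate(content: bytes, author: str, created: str, key: bytes) -> dict:
    """Build a signed provenance record for a piece of content."""
    record = {
        "sha256": hashlib.sha256(content).hexdigest(),
        "author": author,
        "created": created,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {**record, "signature": signature}


def verify_certificate(content: bytes, certificate: dict, key: bytes) -> bool:
    """Check that the content matches the record and the record is untampered."""
    record = {k: v for k, v in certificate.items() if k != "signature"}
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, certificate.get("signature", ""))
    content_ok = record.get("sha256") == hashlib.sha256(content).hexdigest()
    return signature_ok and content_ok


# Hypothetical key and metadata; a real system would load the key from a secret store.
key = b"demo-signing-key"
article = b"Quarterly report, final version."
cert = issue_certificate(article, author="example-author", created="2026-01-01", key=key)
print(verify_certificate(article, cert, key))
```

Verification fails if either the content or any field of the record changes after signing, which is the property an auditor needs in order to trust the record.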

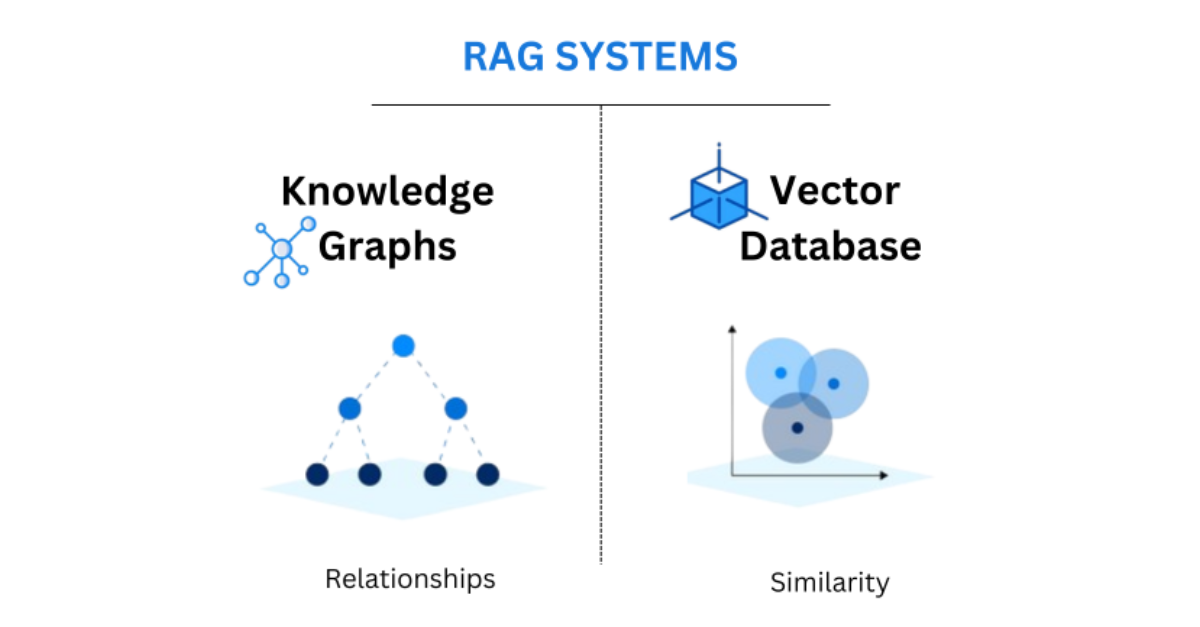

The Backbone of Memory: A Deep Dive into Vector Databases and RAG Architecture

Solving the AI "Hallucination" Problem

One of the most frequent topics in AI News at Scalexa is the refinement of Retrieval-Augmented Generation (RAG). While early AI models often suffered from "hallucinations" (confidently stating false information), modern RAG architectures mitigate this by connecting the LLM to a high-speed vector database like Pinecone, Milvus, or Weaviate. This allows the AI to "look up" facts in your company’s private documentation before generating an answer. For a technical agency like Scalexa, this means building support bots and internal search engines whose answers are far more reliable because they are grounded in real-time, verified data and can be traced back to a source. The transition from general AI to "Context-Aware AI" is the single biggest factor in enterprise adoption today. By storing your data as high-dimensional vectors, you enable the AI to understand semantic relationships between concepts, rather than just matching keywords.

Optimizing the Technical Stack

Choosing the right vector database is a critical decision for your tech stack. We have reviewed the performance of managed solutions versus self-hosted instances, and for high-volume e-commerce, the latency of your vector search is just as important as your page load speed. Scalexa specializes in optimizing these data pipelines, ensuring that your AI can retrieve the right information in milliseconds. As we move further into 2026, the ability to give your AI a "long-term memory" through advanced vector storage will be the differentiator between a simple chatbot and a true digital assistant. Stay tuned to Scalexa for more deep dives into the infrastructure powering the next generation of intelligent software.

AI Infrastructure: Leveraging private data for custom LLMs and the 2026 AI News roadmap.
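The retrieval step described above can be sketched in a few lines. This is a toy example under stated assumptions: the three-dimensional vectors are hand-written stand-ins for real embeddings, the in-memory dictionary stands in for a vector database such as the ones named above, and the helper names are hypothetical. It uses cosine similarity, one common way to compare embeddings.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Similarity of two vectors by the angle between them (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the k document ids whose vectors are closest to the query."""
    ranked = sorted(
        index,
        key=lambda doc_id: cosine_similarity(query, index[doc_id]),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, passages: list[str]) -> str:
    """Ground the model by placing retrieved passages ahead of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


# Toy data: real embeddings come from an embedding model and have many more dimensions.
documents = {
    "refunds": "Refunds are issued within 14 days of purchase.",
    "shipping": "Orders ship within 2 business days.",
    "careers": "We are hiring frontend engineers.",
}
index = {
    "refunds": [0.9, 0.1, 0.0],
    "shipping": [0.2, 0.9, 0.1],
    "careers": [0.0, 0.1, 0.9],
}
query_vector = [0.8, 0.3, 0.0]  # stands in for the embedded question
hits = top_k(query_vector, index, k=2)
prompt = build_prompt("How long do refunds take?", [documents[h] for h in hits])
print(prompt)
```

The language model only sees the passages that retrieval returns, so answer quality depends heavily on the quality of the embeddings and on how the documents are chunked. A dedicated vector database replaces the linear scan here with an approximate nearest-neighbor index to keep lookups fast at scale.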